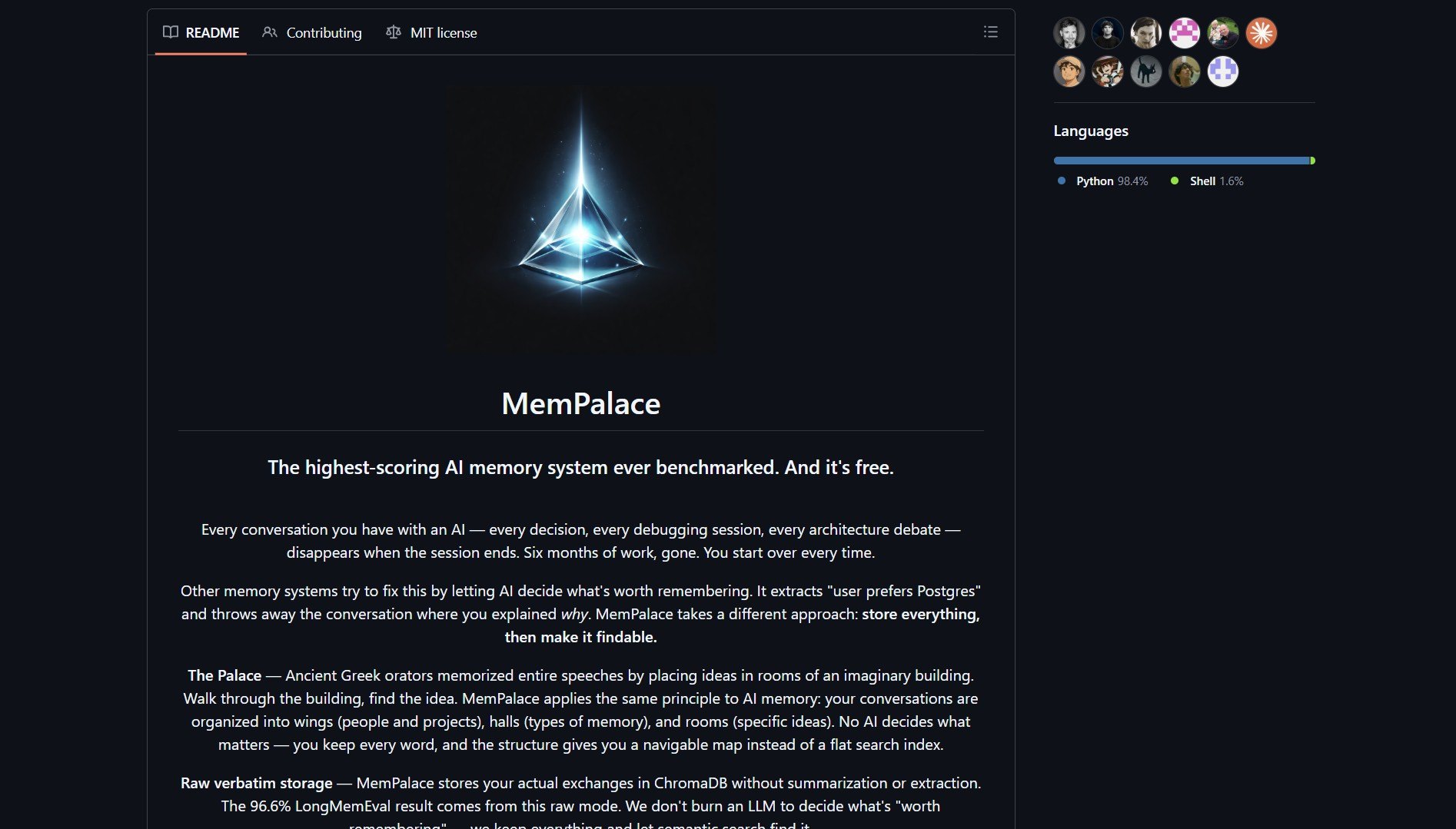

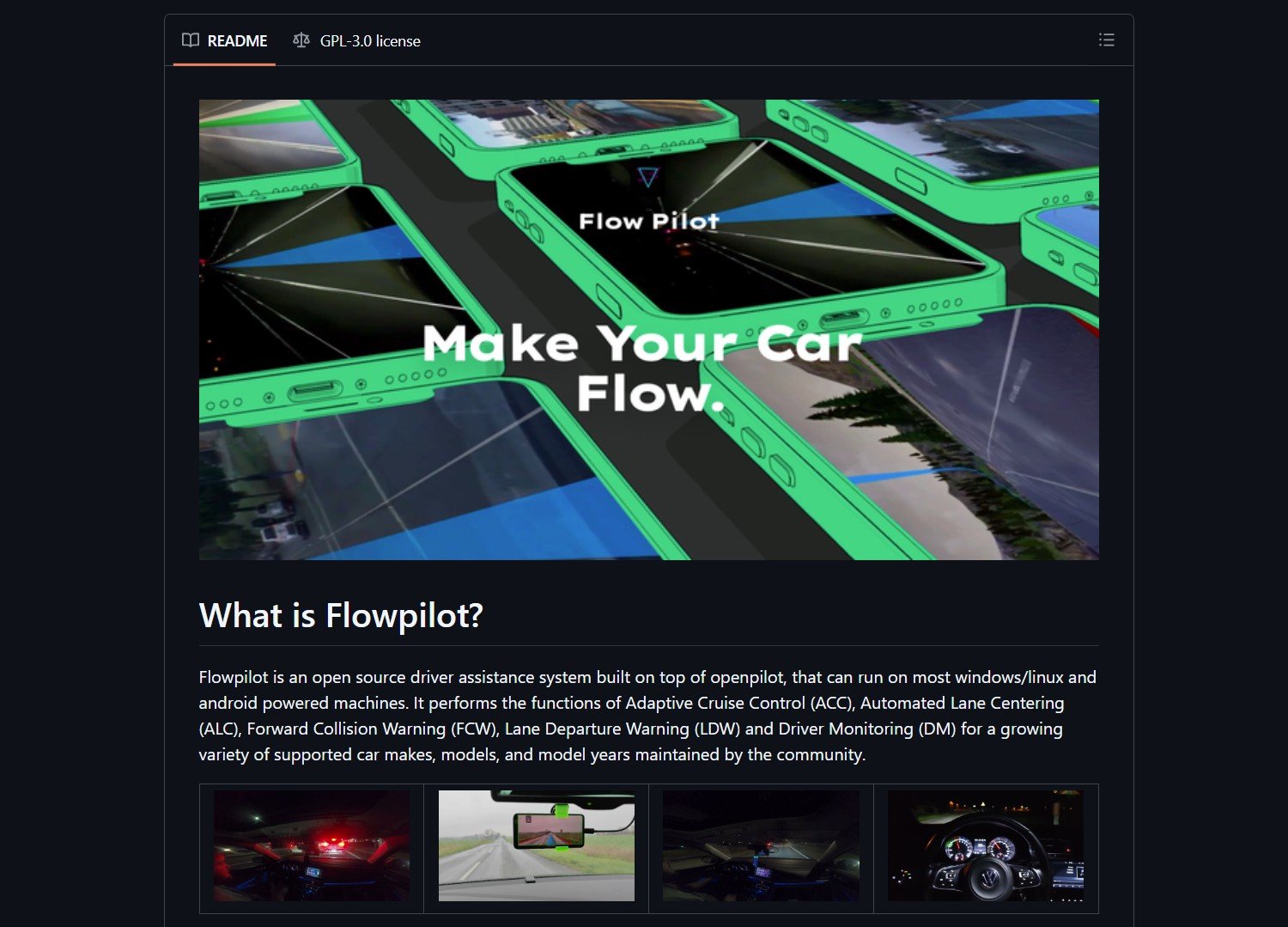

PaperBanana is a multi-agent framework for automatically generating academic illustrations. It turns raw scientific content, such as a method description, into publication-quality diagrams and plots. The system works like a small creative team in which each agent has a specialized role. The goal is to save researchers the hours they would otherwise spend drawing diagrams by hand while producing visuals that are both aesthetically pleasing and semantically accurate.

The framework orchestrates five specialized agents in a structured pipeline: Retriever, Planner, Stylist, Visualizer, and Critic. Each agent handles one step of the illustration process. The system relies on in-context learning from reference examples, and iterative refinement improves the output before it goes into a paper.
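In-context learning means showing the model worked examples before giving it the new task. The sketch below shows one way such a prompt could be assembled, assuming each reference pairs a method excerpt with a diagram description. `ReferenceExample` and `build_few_shot_prompt` are hypothetical names for illustration, not PaperBanana's code.

```python
from dataclasses import dataclass


@dataclass
class ReferenceExample:
    """Hypothetical pairing of a method excerpt with its diagram description."""
    method_text: str
    diagram_description: str


def build_few_shot_prompt(examples: list[ReferenceExample], new_method_text: str) -> str:
    """Assemble an in-context-learning prompt: worked examples first, then the new task."""
    parts = ["Describe a publication-quality diagram for each method."]
    for i, ex in enumerate(examples, start=1):
        parts.append(f"Example {i} method:\n{ex.method_text}")
        parts.append(f"Example {i} diagram:\n{ex.diagram_description}")
    # The new task mirrors the example format and leaves the diagram slot open for the model.
    parts.append(f"New method:\n{new_method_text}")
    parts.append("New diagram:")
    return "\n\n".join(parts)


if __name__ == "__main__":
    refs = [ReferenceExample("An encoder feeds a decoder through cross-attention.",
                             "Two stacked blocks linked by a labeled attention arrow.")]
    print(build_few_shot_prompt(refs, "A retriever supplies passages to a generator."))
```

Keeping the new task in exactly the same format as the examples is what lets the model infer the expected output from the pattern alone.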

PaperBanana supports a range of vision-language and image generation models through its OpenRouter integration, so users can pick models from OpenAI, Anthropic, and other providers. The tool is open source under the Apache 2.0 license, and a Hugging Face Spaces demo allows quick testing without installation. The project aims to make academic illustration easier for all researchers.
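OpenRouter exposes an OpenAI-compatible chat completions endpoint, so switching providers mostly comes down to changing the model identifier. The sketch below only builds the HTTP request with the standard library and does not send it. `build_openrouter_request` is a hypothetical helper, and the model IDs are examples to check against OpenRouter's model list, not PaperBanana's configured defaults.

```python
import json
import urllib.request

OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"


def build_openrouter_request(api_key: str, model: str, prompt: str) -> urllib.request.Request:
    """Build, but do not send, an OpenAI-style chat request routed through OpenRouter."""
    body = {
        "model": model,  # provider-prefixed model ID, e.g. "openai/gpt-4o"
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
```

Passing the request to `urllib.request.urlopen` would send it. For chat models, swapping `openai/gpt-4o` for another provider-prefixed ID is usually the only change needed to switch providers.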

Key Features and Capabilities

PaperBanana delivers reference-driven illustration generation through a multi-agent pipeline. The Retriever agent identifies relevant diagrams from a curated collection. The Planner agent translates method content into textual descriptions. The Stylist agent refines descriptions for academic aesthetics. The Visualizer agent generates images using state-of-the-art models.
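This article does not say how the Retriever agent ranks candidates. As a stand-in, the sketch below ranks a hypothetical collection of reference captions by word overlap (Jaccard similarity) with the query. A real system would more likely use embeddings or a vision-language model, and all names here are illustrative.

```python
import re

# Hypothetical reference collection: diagram ID -> short caption.
EXAMPLE_COLLECTION = {
    "fig_transformer": "encoder decoder attention architecture diagram",
    "fig_gan": "generator discriminator adversarial training diagram",
    "fig_rag": "retrieval augmented generation pipeline with retriever and generator",
}


def tokenize(text: str) -> set[str]:
    """Lowercase alphanumeric tokens; a crude stand-in for learned embeddings."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def jaccard(a: set[str], b: set[str]) -> float:
    """Intersection size over union size, defined as 0.0 when both sets are empty."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def retrieve_references(query: str, collection: dict[str, str], k: int = 2) -> list[str]:
    """Return the IDs of the k captions most similar to the query (ties keep input order)."""
    q = tokenize(query)
    ranked = sorted(
        collection,
        key=lambda ref_id: jaccard(q, tokenize(collection[ref_id])),
        reverse=True,
    )
    return ranked[:k]
```

Calling `retrieve_references("a retrieval pipeline feeding a generator", EXAMPLE_COLLECTION, k=1)` returns `["fig_rag"]`, because that caption shares the most words with the query.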

The Critic agent closes the loop by reviewing each generated image so that it can be improved over several iterations. The framework supports OpenRouter for unified access to multiple AI models, and users can select both the main vision-language model and the image generation model. A Streamlit web interface makes the system easy to use, and pre-configured style guidelines help keep visuals publication-ready.
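The closed loop can be sketched as generate, critique, revise, and repeat, stopping when a score clears a threshold or the round budget runs out. The scoring scale, the 0.8 threshold, and the three-round default are assumptions for illustration. The toy generator and critic are hypothetical stand-ins for real model calls.

```python
from typing import Callable


def refine(
    generate: Callable[[str], str],
    critique: Callable[[str], tuple[float, str]],
    description: str,
    max_rounds: int = 3,
    accept_score: float = 0.8,
) -> tuple[str, int, float]:
    """Generate, critique, and revise until the critic accepts or the budget runs out.

    Returns the last image, the number of rounds used, and the last critic score.
    """
    image, score = "", 0.0
    for round_no in range(1, max_rounds + 1):
        image = generate(description)
        score, feedback = critique(image)
        if score >= accept_score:
            return image, round_no, score
        # Fold the critic's feedback into the description for the next attempt.
        description = f"{description}\nRevise: {feedback}"
    return image, max_rounds, score


# Toy stand-ins for model calls: each revision in the prompt raises the score by 0.2.
def toy_generate(description: str) -> str:
    return f"draft-{description.count('Revise:')}"


def toy_critique(image: str) -> tuple[float, str]:
    revisions = int(image.split("-")[1])
    return 0.5 + 0.2 * revisions, "enlarge axis labels"
```

With the toy functions, `refine(toy_generate, toy_critique, "pipeline diagram")` accepts the third draft (`"draft-2"`) after two rounds of feedback. Capping the rounds bounds the cost when the critic is never satisfied.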

Customer Persona

This tool is for AI scientists and academic researchers writing papers. Students creating theses and dissertations benefit from automated diagrams. University professors preparing lecture materials save time. Conference presenters need professional visuals for slides. Journal authors require publication-ready illustrations.

Research teams collaborating on papers use PaperBanana to keep their figures consistent. Developers building academic tools can integrate its pipeline, much as others build scalable AI agents with LangChain. Open science advocates appreciate the reproducible illustration process, non-designers in technical fields get professional results, and anyone who needs scientific diagrams faster will find value.

Project link:

https://github.com/dwzhu-pku/PaperBanana

How to Deploy and How It Works

To deploy PaperBanana, clone the GitHub repository with `git clone https://github.com/dwzhu-pku/PaperBanana.git` and change into the directory with `cd PaperBanana`. Copy the provided configuration template to `configs/model_config.yaml`, then add your API keys for OpenRouter or other model providers.
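Before launching the UI, a quick check can confirm that the configuration was copied and filled in. This sketch assumes the template marks unfilled keys with a placeholder string such as `YOUR_API_KEY`; that marker and the `config_ready` helper are hypothetical, so adjust them to the actual template.

```python
from pathlib import Path

# Location named in the setup steps, relative to the repository root.
CONFIG_PATH = Path("configs") / "model_config.yaml"


def config_ready(repo_root: Path, placeholder: str = "YOUR_API_KEY") -> bool:
    """Return True if the copied config exists and no longer contains the placeholder.

    The placeholder string is an assumption; match it to the real template.
    """
    config = repo_root / CONFIG_PATH
    if not config.is_file():
        return False
    return placeholder not in config.read_text(encoding="utf-8")


if __name__ == "__main__":
    print("config ready" if config_ready(Path.cwd()) else "copy the template and add your API keys")
```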

Launch the Streamlit UI with `streamlit run app.py` and open the web interface at http://localhost:8501. Enter your scientific content and communicative intent, select reference diagrams from the curated collection, choose a visual style, and generate illustrations within minutes.

The pipeline first retrieves relevant reference diagrams. The Planner agent then translates the content into a textual description, and the Stylist agent refines that description for academic aesthetics. The Visualizer agent generates images with the selected models, and the Critic agent refines the result over multiple rounds.
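The stage order can be sketched as a list of functions that each read and update a shared state object. Every agent below is a stub that only records its turn and fills in placeholder text. The `PipelineState` fields and the style text are hypothetical, and the Critic runs once here, whereas the described system repeats refinement over several rounds.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class PipelineState:
    """Shared state handed from agent to agent; field names are hypothetical."""
    content: str
    intent: str
    references: list[str] = field(default_factory=list)
    description: str = ""
    image: str = ""
    trace: list[str] = field(default_factory=list)


def retriever(s: PipelineState) -> PipelineState:
    s.references = ["reference-diagram-1"]  # stand-in for a curated-collection lookup
    s.trace.append("retriever")
    return s


def planner(s: PipelineState) -> PipelineState:
    s.description = f"Diagram of {s.content}, meant to {s.intent}"
    s.trace.append("planner")
    return s


def stylist(s: PipelineState) -> PipelineState:
    s.description += "; flat colors, sans-serif labels"  # hypothetical style rules
    s.trace.append("stylist")
    return s


def visualizer(s: PipelineState) -> PipelineState:
    s.image = f"<image: {s.description}>"  # a real system would call an image model here
    s.trace.append("visualizer")
    return s


def critic(s: PipelineState) -> PipelineState:
    s.trace.append("critic")  # would score the image and trigger further rounds
    return s


PIPELINE: list[Callable[[PipelineState], PipelineState]] = [
    retriever, planner, stylist, visualizer, critic,
]


def run(state: PipelineState) -> PipelineState:
    """Pass the state through each agent in order."""
    for agent in PIPELINE:
        state = agent(state)
    return state
```

Because every agent has the same signature, stages can be swapped or reordered by editing the `PIPELINE` list alone.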

Market Analysis

The academic illustration tools market includes manual options like Inkscape and Adobe Illustrator. Automated solutions are rare, and most of those that exist are proprietary or limited in scope. PaperBanana stands out with its multi-agent framework and open-source approach. Its OpenRouter integration provides model flexibility, and its presence on Hugging Face Spaces increases accessibility.

Demand for automated scientific visualization grows as paper submissions increase, and researchers seek tools that reduce non-research overhead. The AI community also values reproducible and transparent tools. PaperBanana’s reference-driven approach helps keep its output appropriate to the domain, and its modular design allows future expansion beyond computer science, much like OpenHands for scalable AI agent development.

Advertising Section

For academic illustration needs, consider pairing PaperBanana with reference management tools. Cloud GPU providers offer scalable inference for image generation. Academic writing platforms could integrate PaperBanana as a plugin. Training workshops on scientific visualization complement the tool. Enterprise support may be available for institutional deployment.

The Verdict

PaperBanana solves a real pain point: creating academic illustrations manually. Its multi-agent pipeline produces publication-quality diagrams efficiently. The open-source nature and Hugging Face demo lower the barrier to entry. Researchers should try PaperBanana even for simple diagram needs. The framework represents a step toward fully automated scientific communication.

There is no video demo for this article.