I ran into this repo on GitHub and had to stop, because Nanobot is an ultra-light Clawdbot-style assistant that boots in under a minute. Where Clawdbot needs more than 430,000 lines of code to run, Nanobot delivers the same core agent loop in roughly 4,000 lines, about 1% of the size, which sharply reduces complexity and attack surface.

What is Nanobot?

Nanobot is a minimal, research-friendly agent framework from HKUDS that focuses on readability, speed, and low resource usage. The repository contains a compact core agent loop, a script (core_agent_lines.sh) that counts the core agent's lines on demand, and a layout that keeps the codebase approachable for researchers and engineers who want to build personal AI agents without framework bloat.

Note: Line count comparisons are indicative, not a full measure of capability. A smaller codebase reduces maintenance overhead and attack surface, but you should validate features and stability for your use case.

Customer Persona

The typical Nanobot user is a machine learning researcher or software engineer who needs a lightweight, inspectable agent framework for prototyping AI behaviors. They value code readability, fast iteration cycles, and minimal dependencies over production-ready safety features. This persona often works in academic or R&D environments where they need to experiment with agent loops without the overhead of larger frameworks like LangChain or AutoGPT.

Market Analysis

Nanobot enters a crowded market of AI agent frameworks, competing with heavyweights like LangChain, AutoGPT, and Clawdbot. Its differentiation lies in extreme minimalism (roughly 4,000 lines against Clawdbot's 430,000-plus), which makes it well suited to research and educational use. While it lacks the battle-tested robustness and ecosystem of larger frameworks, its small size allows for full auditability and customization, similar to the approach used by the OpenHands development platform for building and scaling AI agents. For production deployments, teams would likely still choose more mature options, but Nanobot fills a niche for rapid prototyping and agent architecture studies.

Key Features

- Ultra-Lightweight — small codebase that starts fast and is easy to inspect

- Research-Ready — readable structure makes experimentation straightforward

- Lightning Fast Startup — no massive dependency load, quick iterations

- One-Click Deploy — minimal setup to get the agent loop running

How It Works

At a high level, Nanobot implements the standard agent loop with tight, explicit components and a small set of adapters for tools and IO. The project emphasizes compact state encodings and a minimal runtime so you can reason about behavior in a single afternoon.

    git clone https://github.com/HKUDS/nanobot
    cd nanobot
    # inspect line count and core scripts
    bash core_agent_lines.sh
    # run the agent as documented in the README

| Component | Purpose |
|---|---|
| Core loop | The minimal event/task loop that drives agent decisions |
| Adapters | Lightweight connectors for tools, IO, and external APIs |
| Utilities | Helper scripts, including the line counting tool |
| Tests / Examples | Small demos to exercise typical agent flows |
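To make the core loop and adapter rows concrete, here is a minimal sketch of a generic decide, act, observe loop in Python. It is not taken from the Nanobot source: `Agent`, `Policy`, `Tool`, and `scripted_policy` are hypothetical names I made up for illustration, and the policy is a scripted stand-in for what would normally be a model call.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Tuple

# Hypothetical types for illustration only; not Nanobot's actual API.
Tool = Callable[[str], str]
# A policy reads the history and returns (tool_name, argument), or None to stop.
Policy = Callable[[List[str]], Optional[Tuple[str, str]]]


@dataclass
class Agent:
    policy: Policy
    tools: Dict[str, Tool] = field(default_factory=dict)
    history: List[str] = field(default_factory=list)

    def run(self, task: str, max_steps: int = 5) -> List[str]:
        """Drive the decide -> act -> observe loop until the policy stops."""
        self.history.append(f"task: {task}")
        for _ in range(max_steps):
            decision = self.policy(self.history)
            if decision is None:
                break
            name, arg = decision
            tool = self.tools.get(name)
            # Unknown tools become an observation instead of crashing the loop.
            observation = tool(arg) if tool else f"unknown tool: {name}"
            self.history.append(f"{name}({arg}) -> {observation}")
        return self.history


def scripted_policy(history: List[str]) -> Optional[Tuple[str, str]]:
    """Stand-in for a model call: echo the task once, then stop."""
    if len(history) == 1:
        return ("echo", history[0][len("task: "):])
    return None


agent = Agent(policy=scripted_policy, tools={"echo": lambda text: text.upper()})
trace = agent.run("say hello")
print(trace)
```

The point of the sketch is the shape: tools are plain callables behind a dictionary (the "adapter" role), and the whole control flow fits in one short method, which is the kind of thing a small codebase lets you read end to end.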

Tip: Run bash core_agent_lines.sh to check the current line count for yourself, and use the small demos to understand the agent’s action model before extending it.
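If you want to sanity-check a line count independently of the script, a rough Python equivalent might look like the sketch below. I have not reproduced what core_agent_lines.sh actually does; `count_code_lines` is a hypothetical helper that assumes "lines" means non-blank lines in `.py` files, so its totals may differ from the script's.

```python
import tempfile
from pathlib import Path


def count_code_lines(root: Path, suffix: str = ".py") -> int:
    """Count non-blank lines in files under `root` whose names end in `suffix`.

    Assumption: blank lines are skipped and comments are counted. The real
    script may use different rules or only look at specific directories.
    """
    total = 0
    for path in sorted(root.rglob(f"*{suffix}")):
        if not path.is_file():
            continue
        text = path.read_text(encoding="utf-8", errors="ignore")
        total += sum(1 for line in text.splitlines() if line.strip())
    return total


# Small self-contained demo on a throwaway directory.
with tempfile.TemporaryDirectory() as tmp:
    demo_root = Path(tmp)
    (demo_root / "loop.py").write_text("import os\n\nprint(os.name)\n", encoding="utf-8")
    (demo_root / "notes.txt").write_text("not counted\n", encoding="utf-8")
    demo_total = count_code_lines(demo_root)

print(demo_total)
```

Pointing `count_code_lines` at a local clone gives you a second opinion on the headline number, keeping in mind the counting rules above are my assumption.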

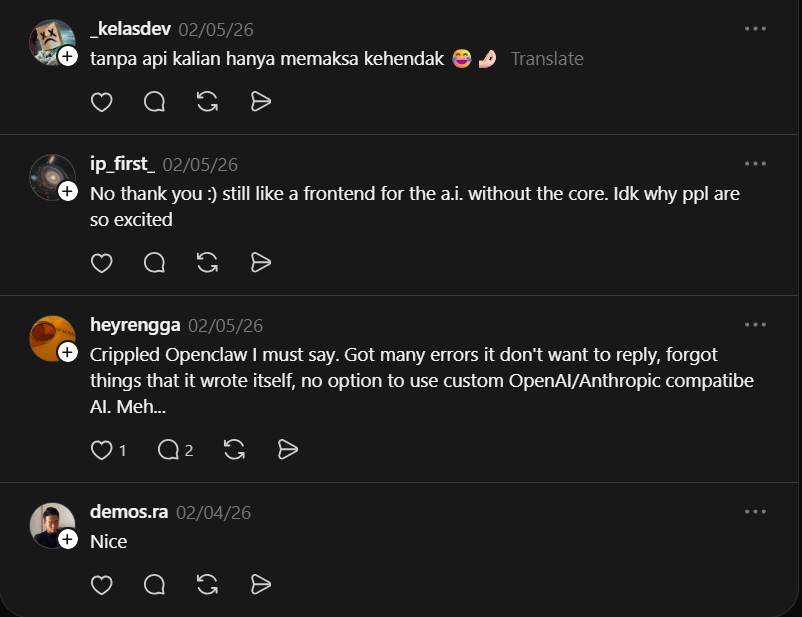

Community Reactions

“No thank you 🙂 still like a frontend for the a.i. without the core. Idk why ppl are so excited” — @ip_first_

“Crippled Openclaw I must say. Got many errors it don’t want to reply, forgot things that it wrote itself, no option to use custom OpenAI/Anthropic compatibe AI. Meh…” — @heyrengga

“honestly feels fine but not magic. efficiency is decent once you tune it, security seems ok if you lock down perms and don’t run it with way more access than needed. still wouldn’t trust it blindly on prod without babysitting a bit lol.” — u/bjxxjj

“I think it’s still a bit early to have a definitive take, but so far it feels promising with some caveats…” — u/LightCellStudio_es

Project Link

https://github.com/HKUDS/nanobot

Warning: Minimal frameworks lower complexity, but they may omit hardened defaults or safety guards you expect in larger stacks. Do not run on sensitive systems without appropriate sandboxing and access controls.
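One cheap access control you can add yourself is an allowlist in front of the agent's tools. The sketch below is an assumption about how you might wrap tool calls, not something Nanobot ships: `GuardedTools` and `ToolNotAllowed` are hypothetical names, and an in-process check like this complements OS-level sandboxing rather than replacing it.

```python
from typing import Callable, Dict, FrozenSet, List, Tuple


class ToolNotAllowed(PermissionError):
    """Raised when the agent requests a tool outside the allowlist."""


class GuardedTools:
    """Hypothetical wrapper: only allowlisted tools run, every attempt is logged."""

    def __init__(self, tools: Dict[str, Callable[[str], str]], allowed: FrozenSet[str]):
        self._tools = dict(tools)
        self._allowed = allowed
        self.audit_log: List[Tuple[str, str, bool]] = []

    def call(self, name: str, arg: str) -> str:
        permitted = name in self._allowed and name in self._tools
        # Record the attempt before deciding, so denied calls are visible too.
        self.audit_log.append((name, arg, permitted))
        if not permitted:
            raise ToolNotAllowed(name)
        return self._tools[name](arg)


guard = GuardedTools(
    tools={
        "read_note": lambda key: f"note:{key}",
        "run_shell": lambda cmd: "executed",  # registered, but not allowlisted
    },
    allowed=frozenset({"read_note"}),
)

result = guard.call("read_note", "todo")
try:
    guard.call("run_shell", "ls")
    blocked = False
except ToolNotAllowed:
    blocked = True

print(result, blocked, guard.audit_log)
```

The audit log doubles as a starting point for monitoring: denied calls show what the agent tried to do, not just what it was allowed to do.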

Final Thoughts

What caught my attention is the engineering tradeoff: by shrinking the codebase you gain inspectability and speed, which is great for research and prototyping. If you adopt Nanobot for anything beyond experimentation, build a safety layer, add monitoring, and validate behavior under representative workloads.